GCP: Core Services Notes

Cloud Definition⌗

- On Demand Resources

- Broad Network Access (from anywhere)

- Resource Pooling (between many customers)

- Rapid Elasticity

- Measured Service: Pay as needed

- IaaS: Compute Engine

- PaaS: AppEngine serverless

Google’s Network carries 40% of the world’s traffic??! [citation needed]

15 GCP Regions currently

Google Cloud Platform’s products and services can be broadly categorized as compute, storage, big data, machine learning, networking and operations and tools.

Google Organization Management⌗

Ownership policies are hierarchical, so from a Google (email) organization, all authority trickles down through folders, GCP Projects, and then to GCP Resources. However, Ownership can also be granted to an account at the Folder or Project level, and that grant is not revoked by a more restrictive policy inherited from above.

The policies implemented at a higher level in this hierarchy can’t take away access that’s granted at a lower level.
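A minimal sketch of that inheritance rule, assuming a simplified model in which the effective access at any level is the union of the roles granted there and at every level above it. The `Node` class, `effective_roles` function, and the example names are hypothetical and only illustrate the idea; they are not the IAM API.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Node:
    """One level of the hierarchy: organization, folder, project, or resource."""
    name: str
    parent: Optional["Node"] = None
    bindings: dict = field(default_factory=dict)  # member email -> set of role names

    def grant(self, member: str, role: str) -> None:
        self.bindings.setdefault(member, set()).add(role)


def effective_roles(node: Node, member: str) -> set:
    """Union of the roles granted at this node and at every ancestor."""
    roles = set()
    current = node
    while current is not None:
        roles |= current.bindings.get(member, set())
        current = current.parent
    return roles


# Hypothetical hierarchy: organization -> folder -> project.
org = Node("example-org")
folder = Node("team-folder", parent=org)
project = Node("demo-project", parent=folder)

org.grant("alice@example.com", "viewer")     # restrictive grant at the top
project.grant("alice@example.com", "owner")  # broader grant lower down

print(sorted(effective_roles(project, "alice@example.com")))
print(sorted(effective_roles(folder, "alice@example.com")))
```

In this model the viewer-only grant at the organization cannot remove the owner role granted on the project, because roles only accumulate on the way down.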

IAM⌗

Accounts are always tied to a Google email account address.

- Who

- Can do what?

- On which Resource?

IAM provides primitive roles to ease configuration.

- Viewer: Read only access to service. Examine, but not change its state.

- Editor: Deploy applications, modify code, configure services, etc. Allows change in state.

- Owner: Invite members, Remove members, create/delete projects, etc

- Billing Administrator: manage billing, Add/Remove other billing users.

These roles are an easy start, but are not granular enough for many authorization cases within an organization. It is difficult to configure Least-Privilege with the primitive roles alone; custom roles allow for more granular configuration. Custom roles must be actively created and applied at the project or organization level.

Service Accounts are identities, named by an email address, that are given to VMs or Applications rather than people and are authorized with a Google role for access to API services. By handing the same Service Account to a group of VMs or Apps, Authorization changes can be made to a single SA and apply to everything that uses it. SAs can be used to apply Least Privilege effectively between projects and prevent potential access mistakes.

GCP Interaction⌗

Access methods:

- Web UI

- CLI SDK

- Mobile App

- Web APIs

Web API⌗

RESTful API using JSON and OAuth 2.0, must be enabled via the Google Cloud Web Console. Have quotas and limits, so glhf. APIs Explorer enables searching the vast array of APIs.

Two Client libraries:

Cloud Client Library- The ideal, open source, hand built libraries

Google API Client Libraries- Generated client libraries for every Google API. Covers all APIs, but less desirable usability.

Cloud Launcher⌗

Prepackaged software systems which can be automagically deployed into your GCP project’s resources. Can be configured/modified before launch, security updates to the deployed software are up to the owners.

GCP VM Worlds⌗

VPC Networking⌗

- VPC networks are global and span regions; subnets are regional and span the zones within a region.

- Changes can be made to configuration which will not disrupt the IP addresses of existing VMs.

Cloud Load Balancing⌗

- Direct traffic to a single, global anycast IP address

- Traffic goes over the Google Backbone from the closest point of presence to the requesting User.

- Backends are selected based on Load.

- Only healthy backends receive traffic.

- No pre-warming is required.

- Global HTTP(S): Layer 7 load balancing based on load. URL Routing capable.

- Global SSL Proxy: Layer 4 load balancing of non-HTTPS SSL traffic based on load, for specific port numbers.

- Global TCP Proxy: Layer 4 load balancing of non-SSL TCP traffic, supported on specific port numbers.

- Regional: load balancing of any network protocol and port number.

- Regional Internal: Load balancing inside a VPC, used for internal routing of applications.
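The two selection rules above (only healthy backends receive traffic, and backends are chosen based on load) can be sketched as follows. This is a toy model under stated assumptions: the `Backend` class, the `pick_backend` function, and the "lowest load wins" policy are hypothetical simplifications, not how Cloud Load Balancing is implemented.

```python
from dataclasses import dataclass


@dataclass
class Backend:
    name: str
    healthy: bool
    load: float  # fraction of capacity in use, 0.0 to 1.0 (assumed metric)


def pick_backend(backends):
    """Return the least-loaded backend among those passing health checks."""
    healthy = [b for b in backends if b.healthy]
    if not healthy:
        raise RuntimeError("no healthy backends available")
    return min(healthy, key=lambda b: b.load)


backends = [
    Backend("us-central", healthy=True, load=0.70),
    Backend("europe-west", healthy=False, load=0.10),  # fails its health check
    Backend("asia-east", healthy=True, load=0.35),
]

print(pick_backend(backends).name)
```

The unhealthy backend is skipped even though it reports the lowest load, so the request goes to the least-loaded healthy one.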

Google runs the public DNS resolver at 8.8.8.8. Cloud DNS is the managed service for controlling your own DNS Zones and records, served from locations around the world.

VPNs⌗

- VPN(IPSec + Cloud Router): Secure multi Gbps connection over VPN tunnels

- Direct Peering: Private connection between customers and Google for hybrid cloud workloads

- Carrier Peering: Connection through the largest partner network of service providers.

- Dedicated Interconnect: Connect N x 10G transport circuits for private cloud traffic to Google Cloud at Google POPs.

Peering is not covered by a Google service level agreement. Dedicated Interconnect uses approved hardware and is covered by an SLA.

Storage⌗

Cloud Storage⌗

Fully managed, scalable object storage with high durability and availability. Objects are addressed by URLs, but it is not a filesystem for OSes. Organized into Buckets; the objects stored in them are immutable. Data is encrypted at rest, and data in transit is encrypted by default.

ACLs allow finer controls to access to buckets. Two components: Scope(who) and a Permission(action).

Cloud Storage versioning keeps a history of modifications to objects. Cloud Storage lifecycle management policies act on that history; eg delete revisions > 365 days old.
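To make the "delete revisions older than 365 days" example concrete, here is a small sketch of how such a rule behaves when evaluated against a versioned object. The `ObjectVersion` class and `apply_lifecycle` function are hypothetical names for illustration; this models the policy's effect and is not the Cloud Storage API or its configuration format.

```python
from dataclasses import dataclass


@dataclass
class ObjectVersion:
    name: str
    age_days: int
    is_live: bool  # True for the current version, False for older revisions


def apply_lifecycle(versions, max_age_days=365):
    """Keep the live version and any older revision not past max_age_days."""
    return [v for v in versions if v.is_live or v.age_days <= max_age_days]


history = [
    ObjectVersion("report.csv", age_days=800, is_live=False),
    ObjectVersion("report.csv", age_days=400, is_live=False),
    ObjectVersion("report.csv", age_days=90, is_live=False),
    ObjectVersion("report.csv", age_days=5, is_live=True),
]

kept = apply_lifecycle(history)
print([v.age_days for v in kept])
```

Under this assumed rule the live object is never removed by age alone; only superseded revisions older than the threshold are dropped.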

Cloud storage Interactions.⌗

- Multi-Region & Regional: high performance object storage.

- Nearline & Coldline: backup and archival storage.

- Regional storage for specific region: eg US Central, EU West, Asia East, etc

- Multi Region storage: US, EU, Asia, separated by > 160km

- Nearline storage: Low cost, highly durable, for infrequently accessed data. ~once/month

- Coldline storage: low cost, highly durable, for disaster recovery, accessed at most once per year. Higher per operation costs, minimum 90 day storage.

- Nearline + Coldline charge per GB read.
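As a rough sketch of how the access-frequency guidance above might translate into a choice of class, the function below uses the "about once a month" and "at most once a year" figures as thresholds. The function name, its parameters, and the exact cut-offs are assumptions made for illustration, not official sizing rules.

```python
def pick_storage_class(reads_per_year: float, global_audience: bool = False) -> str:
    """Suggest a storage class from expected access frequency (illustrative thresholds)."""
    if reads_per_year < 0:
        raise ValueError("reads_per_year must be non-negative")
    if reads_per_year <= 1:
        return "Coldline"       # disaster recovery, at most once per year
    if reads_per_year <= 12:
        return "Nearline"       # infrequent access, roughly once per month
    # Frequently accessed data: choose by where it is served.
    return "Multi-Regional" if global_audience else "Regional"


print(pick_storage_class(0.5))
print(pick_storage_class(12))
print(pick_storage_class(5000, global_audience=True))
```

A real decision would also weigh the per-GB read charges and minimum storage durations mentioned above.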

Transferring⌗

- Online Transfer: SDK, Web UI drag & drop

- Storage Transfer Service: Scheduled, managed batch transfers from other clouds or from an HTTPS endpoint.

- Transfer Appliance: Licensed Google server you connect on Prem and then ship back to GCP with loaded data.

- GCP Products R/W to Storage: BigQuery, AppEngine, Compute Engine, Cloud SQL

BigTable⌗

Google’s NoSQL big data database service. A wide-column database (friendly to sparsely populated rows) for terabyte-scale applications. Each item in the DB can be looked up by a single key pointing to a set of columns.

High throughput R/W, good choice for operational and analytical querying. HBase API compatible.

- Scalability: better than HBase, just increase machine count, low maintenance. Data is encrypted at rest. BT is the same service which powers most of Google.

- Bigtable Interaction: Application API, Streaming (Cloud Dataflow streaming, Storm), Batch Processing (Hadoop MR, Spark, Dataflow)

Cloud SQL & Spanner⌗

Schema and Transaction centered databases. Enforced transactions for requested operations.

CloudSQL: MySQL or PostgreSQL(in beta) engines available. Provides replica services like read, failover, and external replicas. Backup data with on-demand or scheduled backups. Secured with firewalls, encrypted data at rest. Accessible by other GCP services.

Spanner: horizontally scalable cluster, transaction guarantees at a global scale, schemas, automatic replication, HA, petabytes, handles data sharding, strong consistency at global scale. Suited to finance and inventory applications.

Cloud Datastore⌗

Cloud Mongo… Highly scalable NoSQL database choice for AppEngine storage. Handles sharding and replication for HA and durability under load. Offers transactions that affect multiple rows. Free for small use cases.

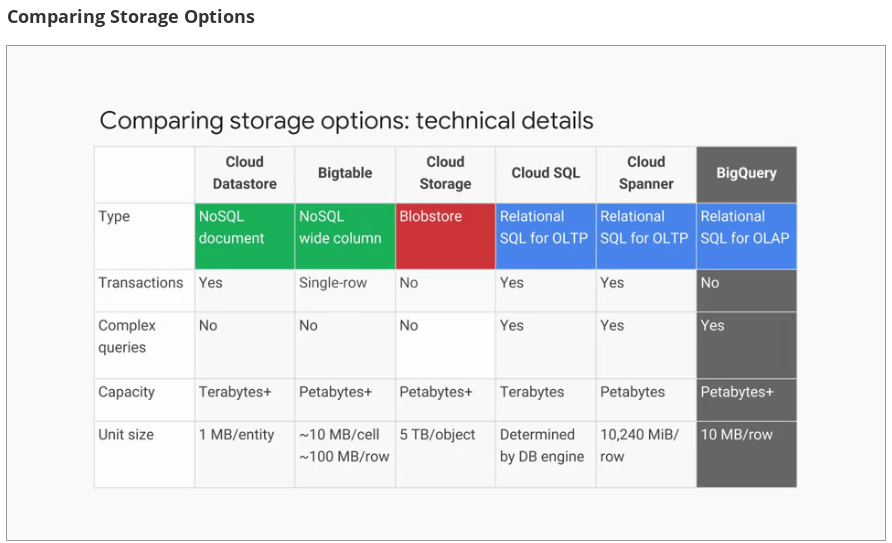

Datastore Comparison⌗

Containers, Kubernetes, & GKE⌗

GKE sits between IaaS and PaaS.

Reason for existence⌗

Scaling an app with VMs means every instance runs a full OS, which usually isn’t needed. Containers give independent scalability of workloads like a PaaS environment, and an abstraction layer of the operating system and hardware, like an IaaS env.

Containers scale like PaaS, but same flexibility of IaaS. Applications can be scaled with communication between each other with a network fabric.

Docker is the common Container Image creation tool…

GKE⌗

Kubernetes as a managed service in the cloud.

GKE clusters can be customized, and they support different machine types, numbers of nodes and network settings.

- Pod: Smallest deployable unit in Kubernetes.

  - Each pod gets a unique IP address.

  - Can contain multiple containers.

- Deployment: Group of Pod replicas.

  - Keeps pods running even if some fail.

  - By default only accessible within the cluster.

- Service: Kubernetes fundamental representation for Load Balancing.

  - A service groups a set of pods together and provides a stable endpoint for them.

  - GKE enables External Load Balancers to point to a Kubernetes Service.

  - Pods' IP addrs move, Service creates a single endpoint for routing to them.
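The idea that a Service stays stable while the Pods behind it come and go can be illustrated with a small model. Everything here is a hypothetical simplification: the `Service` class, the dictionary shape used for pods, and the round-robin routing are assumptions for the sketch, not the Kubernetes API or its actual load-balancing behaviour.

```python
import itertools


class Service:
    """A stable name that routes to whichever pods currently match its selector."""

    def __init__(self, name, selector):
        self.name = name
        self.selector = selector          # label key/value pairs pods must carry
        self._counter = itertools.count()  # drives simple round-robin (assumed policy)

    def endpoints(self, pods):
        return [
            p for p in pods
            if all(p["labels"].get(k) == v for k, v in self.selector.items())
        ]

    def route(self, pods):
        matching = self.endpoints(pods)
        if not matching:
            raise LookupError(f"no pods match service {self.name}")
        return matching[next(self._counter) % len(matching)]["ip"]


svc = Service("web", selector={"app": "nginx"})

pods = [
    {"ip": "10.0.0.1", "labels": {"app": "nginx"}},
    {"ip": "10.0.0.2", "labels": {"app": "nginx"}},
    {"ip": "10.0.0.9", "labels": {"app": "db"}},
]
first = svc.route(pods)
second = svc.route(pods)

# The nginx pods are rescheduled and come back with a new address.
pods = [
    {"ip": "10.0.1.7", "labels": {"app": "nginx"}},
    {"ip": "10.0.0.9", "labels": {"app": "db"}},
]
third = svc.route(pods)
print(first, second, third)
```

Callers only ever address `svc`; the pod IPs behind it change without the callers needing to know.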

kubectl autoscale scales a Deployment based on different criteria, such as CPU utilization.

kubectl get pods -l "app=nginx" -o yaml prints the matching pods as YAML, which can be saved to a file, edited, and then reapplied to k8s.

Deployment update strategy allows pods to be upgraded without service interruption.

Anthos (Hybrid & Multi-cloud computing)⌗

To summarize, it allows you to keep parts of your systems infrastructure on-premises while moving other parts to the Cloud, creating an environment that is uniquely suited to your company’s needs.

Take advantage of the flexibility, scalability, and lower computing costs offered by cloud services.

Anthos is a hybrid and multi-cloud solution powered by the latest innovations in distributed systems, and service management software from Google.

Anthos

- ‘Rests’ on kubernetes and GKE for On-Prem deployment.

- Centralized management via centralized control plane.

- Policy based application lifecycle delivery across hybrid and multi-cloud environments.

- Rich monitoring tools across all potential environments.

**GKE On-Prem**

- Turn-key production grade Conformed K8s version with best practices configuration pre-loaded.

- Easy upgrade path to the latest k8s releases approved by Google.

- Accesses Cloud Build, container registry, audit logging, & more

- Integrates with Istio, Knative, and Marketplace Solutions.

- Same configurations from the standard GKE, so write once deploy anywhere.

- Anthos + Istio Open Source service mesh monitor all layers of communication between clusters.

Stackdriver offers a fully managed logging, metrics collection, monitoring, dashboarding, and alerting solution that watches all sides of your hybrid or multi-cloud network.

- Anthos Configuration Management(git; Policy Repository) provides a single source of truth for your clusters' configuration.

- K8s configuration lives in the cloud for HA.

- Enables single code commit -> makes changes.

App Engine⌗

For just focusing on the code. PaaS manages hardware and networking infrastructure to deploy your code… Provides built in cloud services that most web apps need. (No)SQL DBs, in-memory caching, load balancing, health checks, logging, and authentication for users.

App Engine automatically scales web services, so you only pay for resources utilized. Perfect for highly variable or unpredictable web applications, like mobile backends.

App Engine offers two environments: Standard and Flexible.

AE: Standard⌗

Simpler to deploy, some free daily usage quotas for low traffic apps which might incur no costs.

Runtimes: Java, Python, PHP, Go supported by Standard. Enforces ‘sandbox’ restrictions on code which is run. The sandbox is a software construct that's independent of the hardware, operating system, or physical location of the server it runs on. This enables App Engine to scale the code with precise tuning, but imposes constraints.

Constraints: No local Filesystem for scratch data, data must be persisted to Database, all requests have a 60s timeout, no third party software.

Use the App Engine SDK for development and testing, then use the same SDK to deploy. The application can make direct calls to GCP Services: Data Services, Logging, and launching actions such as scheduled tasks.

AE: Flexible⌗

Flexible Environment runs your Containers on Compute Engine VMs that GCP manages and updates. This gets around the limitations of Standard AE.

- Build & Deploy containerized Apps

- No Sandbox Constraints

- Still have access to App Engine APIs

- Geographical control of deployment

Flexible environment lets you:

- SSH into the virtual machines on which your application runs

- Use local disk for scratch space

- Install third-party software

- Make calls to the network without going through App Engine

Flexible Environment cannot scale to zero, so there is always some charge for the resources that stay running.

App Engine environment treats containers as a means to an end, but for Kubernetes Engine, containers are a fundamental organizing principle.

Flexible is kind of the bastard in between K8s and App Engine Std.

Cloud Endpoints & Apigee Edge⌗

What is an API:

Developers structure the software they write so that it presents a clean, well-defined interface that abstracts away needless details, and then they document that interface.

Google Cloud platform provides two API management tools.

Cloud Endpoints⌗

Deployed via proxy in-front of your service.

- Control access (AuthN/AuthZ) and validate calls with JWTs or Google API keys

- Generates client libraries.

- Supports App Engine Flex, K8s Engine, and Compute Engine :: Clients: Android, iOS, JS

Apigee Edge⌗

Useful to deconstruct legacy applications and break them apart.

- Platform for making APIs available to your customers and partners

- Contains analytics, monetization, and a developer portal

- Business analytics, and billing

Development In Cloud⌗

developers developers developers…

Cloud Source Repositories: GCP Hosted Git instances for a Project. Reduces management cost of Git.

Any number of private Git repositories, organize code for a project.

Cloud Functions⌗

- Create single-purpose functions that respond to events without a server or runtime.

- No more provisioning servers/endpoints for simple events.

- Only pay for when functions run, in 100ms intervals.

- Written in JS; execute in a managed Node.JS environment in GCP

- Define triggers, which launch the JS functions.

- Use cloud functions to enhance existing applications without having to worry about scaling.
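The 100ms billing interval mentioned above can be worked through with a little arithmetic. This sketch assumes run time is rounded up to the next full interval; the rounding direction and the `billed_milliseconds` helper are assumptions for illustration, and no prices are modelled.

```python
import math


def billed_milliseconds(duration_ms: float, increment_ms: int = 100) -> int:
    """Round a function's run time up to the next billing increment (assumed rule)."""
    if duration_ms < 0:
        raise ValueError("duration_ms must be non-negative")
    return math.ceil(duration_ms / increment_ms) * increment_ms


# Three invocations of different lengths and what each would be billed as.
for duration in (30, 100, 130):
    print(duration, "ms runs are billed as", billed_milliseconds(duration), "ms")
```

Under that assumption a 30 ms and a 100 ms invocation cost the same, which is one reason very short functions do not get proportionally cheaper.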

Deploying Infrastructure as Code⌗

Reduce work of managing imperative environment build process.

GCP Deployment Manager: Infrastructure Management Service using Declarative code

- Provides Repeatable Deployments

- Create a .yaml template describing your environment, which Deployment Manager uses to create resources.

- Commit cloud templates into code repositories.

- Executes necessary actions to create the environment the template file specifies.
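The difference between a declarative template and an imperative build script can be sketched as a planning step: compare the desired state with what exists and derive the actions. The `plan` function and the resource dictionaries below are hypothetical and only illustrate the declarative idea; they are not Deployment Manager's template schema or API.

```python
def plan(desired: dict, current: dict) -> list:
    """Work out the actions needed to move from the current state to the desired one."""
    actions = []
    for name, spec in desired.items():
        if name not in current:
            actions.append(("create", name))
        elif current[name] != spec:
            actions.append(("update", name))
    for name in current:
        if name not in desired:
            actions.append(("delete", name))
    return actions


# Hypothetical template contents (desired) versus what is deployed (current).
desired = {
    "web-vm": {"type": "vm", "machine": "n1-standard-2"},
    "assets": {"type": "bucket"},
}
current = {
    "web-vm": {"type": "vm", "machine": "n1-standard-1"},
    "old-vm": {"type": "vm", "machine": "n1-standard-1"},
}

print(plan(desired, current))
```

Because the template only states the end result, running the same plan twice against an up-to-date environment yields no actions, which is what makes deployments repeatable.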

Proactive Instrumentation⌗

- Stackdriver is GCP’s tool for monitoring, logging, and diagnostics.

Stackdriver gives you access to many different kinds of signals from your infrastructure platforms, virtual machines, containers, middleware and application tier, logs, metrics and traces

Stackdriver Core Components

- Monitoring: Healthchecks, service metrics, uptime, dashboard, alerts

- Logging: View, filter, search, log count metrics, export to other Data services

- Trace:

- Error Reporting: Tracks and groups errors from Applications, notifies on new errors

- Debugging: Match production errors to source code, view application state w/o debugging statements

- Profiler(beta)

Big Data…Solutions⌗

Google knows, every company will be a data company. Because making the fastest and best use of data is a critical source of competitive advantage. Google Cloud provides a way for everybody to take advantage of Google’s investments in infrastructure and data processing innovation.

Integrated Serverless Platform

Pay for the services you need.

Cloud Dataproc⌗

Managed ‘Hadoop’ toolchain providing Map/Reduce data processing of Spark, Pig, and Hive Apache products. Built on Compute Engine VMs, easily scaled up and down, customize versions, monitored with Stackdriver.

Running jobs in GCP reduces costs because compute resources are billed per second and only while they are used. Can use pre-emptible instances too.

Spark + SparkSQL to do querying, and Spark Machine Learning Libraries.

Cloud Dataflow⌗

Processes data using Compute Engine Instances, with clusters sized and scaled automatically. Develop and execute a big range of data processing patterns. Dataflow automates management of the compute resources required, removing operational tasks.

- extract

- transform

- load

- batch computation

- continuous computation

Dataflow Pipelines flow data from source through Transformation steps. Each transformation manages its resources needed automatically.

Automated and optimized work partitioning built in. No hot-spot data loads.

Use Cloud Dataflow for:

- ETL pipelines to move, filter, enrich, and shape data

- Data analysis, batch computation or continuous computation using streaming

- Orchestration: Create pipelines that coordinate services, including external services

- Integrates with GCP services: Cloud Storage, Cloud Pub/Sub, BigQuery and Bigtable

- Open source Java & Python SDKs
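The shape of a pipeline (a source flowing through transformation steps to a result) can be sketched with plain Python generators. This is only an illustration of the extract, transform, load pattern; the function names and the sample records are made up, and it does not use the Dataflow SDK.

```python
def parse(lines):
    """Transform step: turn 'user, amount' text lines into records."""
    for line in lines:
        user, amount = line.split(",")
        yield {"user": user.strip(), "amount": float(amount)}


def keep_positive(rows):
    """Filter step: drop refunds and other non-positive amounts."""
    for row in rows:
        if row["amount"] > 0:
            yield row


def total_by_user(rows):
    """Aggregate step: sum amounts per user."""
    totals = {}
    for row in rows:
        totals[row["user"]] = totals.get(row["user"], 0.0) + row["amount"]
    return totals


# Hypothetical source data; a real pipeline would read from storage or a stream.
source = ["alice, 10.5", "bob, -3", "alice, 4.5"]

result = total_by_user(keep_positive(parse(source)))
print(result)
```

Each step consumes the previous step's output lazily, which is the same composition idea a managed service scales out across workers.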

BigQuery⌗

Fully managed petabyte scale data analytics warehouse. Near real-time interactive analysis of massive datasets(100s of TB) using SQL like syntax. No cluster maintenance required, pay as you go model.

Easy to load data via Cloud Storage or Datastore, or stream at 100k rows/s. Dataproc, Dataflow, Hadoop, and Spark can also feed the BigQuery lake.

BigQuery is globally available. Able to share datasets with others who pay for their own querying costs.

Pricing is separated between Data and Querying. Pay only for storage and processing time used. Automatic discount for long term storage(90 days -> discount)

Pub/Sub⌗

Simple, reliable, scalable foundation for stream analytics that lets independent services send and receive messages. “At least once delivery” at low latency. On-demand scalability to 1 million messages/s and higher, controlled by quotas.

- Supports many-to-many asynchronous messaging.

- Application components make push/pull subscriptions to topics

- Includes support for offline consumers(message queuing)
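A toy in-memory model can show what "at least once" delivery and queuing for offline consumers mean in practice: each subscription holds its own copy of a message until the consumer acknowledges it, and unacknowledged messages are delivered again. The `Topic` and `Subscription` classes and their methods are hypothetical and are not the Pub/Sub client library.

```python
from collections import deque


class Subscription:
    def __init__(self):
        self._pending = deque()  # messages waiting to be pulled
        self._unacked = {}       # pulled but not yet acknowledged
        self._next_id = 0

    def enqueue(self, message):
        self._pending.append((self._next_id, message))
        self._next_id += 1

    def pull(self):
        """Hand out the next message; it stays tracked until acked."""
        if not self._pending:
            return None
        ack_id, message = self._pending.popleft()
        self._unacked[ack_id] = message
        return ack_id, message

    def ack(self, ack_id):
        self._unacked.pop(ack_id, None)

    def redeliver_unacked(self):
        """Simulate an ack deadline expiring: unacked messages are queued again."""
        for ack_id, message in self._unacked.items():
            self._pending.append((ack_id, message))
        self._unacked.clear()


class Topic:
    def __init__(self):
        self.subscriptions = []

    def subscribe(self):
        sub = Subscription()
        self.subscriptions.append(sub)
        return sub

    def publish(self, message):
        # Many-to-many: every subscription receives its own copy.
        for sub in self.subscriptions:
            sub.enqueue(message)


topic = Topic()
billing = topic.subscribe()
shipping = topic.subscribe()  # stays "offline" for now; its copy simply waits
topic.publish("order-1")

ack_id, msg = billing.pull()   # consumer crashes before acknowledging
billing.redeliver_unacked()
ack_id, msg_again = billing.pull()
billing.ack(ack_id)
print(msg, msg_again, billing.pull(), shipping.pull())
```

The billing consumer sees the same message twice, which is why at-least-once consumers are usually written to tolerate duplicates.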

Cloud Datalab⌗

Interactive data exploration in large datasets. Only pay for resources you use.

- Interactive tool for large-scale data exploration, transformation, analysis, and visualization

- Integrated, open source

- Built on the Jupyter Python environment

- Analyze data in BigQuery, Compute Engine, and Cloud Storage using Python, SQL, & JS

- Easily deploy models to BigQuery

Machine Learning APIs⌗

The Google Machine Learning Platform is now available as a cloud service so that you can add innovative capabilities to your own applications.

Cloud Machine Learning Platform provides modern machine learning services with pre-trained models and a platform to generate your own tailored models.

- TensorFlow to build and run Neural Network Models

  - Can use TPUs for high performance compute

- Fully Managed Machine Learning services

  - Integrated with BigQuery and Cloud Storage

- Pre-Trained machine learning models built by Google

  - Speech: stream results in real time, detects 80 languages

  - Vision: Identify objects, landmarks, text, and content

  - Translate: Language translation + detection

  - Natural language: structure + meaning of text

- Structured Data

  - Classification and regression

  - Recommendations

  - Anomaly detections

- Unstructured Data

  - Image & video analytics

  - Text analytics

Machine learning APIs⌗

Cloud Vision API⌗

Analyze images with a simple REST API, with fast categorization and content analysis. Built on pre-trained machine learning models.

- Detect inappropriate content

- Analyze sentiment

- Extract text

Cloud Speech API⌗

- Can return text in real time

- Highly accurate, even in noisy environments

- Access from any device/service

Cloud Natural Language API⌗

Syntax analysis: identify nouns, verbs, and adjectives, and calculate relationships among words. Understand the overall sentiment expressed in a block of text. Identify people, organizations, locations, events, products, and media from text.

Multiple languages: English, Spanish, and Japanese.

Cloud Translation API⌗

Provides a simple, programmatic interface for translating an arbitrary string into a supported language. When you don’t know the source language, the API can detect it.

Cloud Video Intelligence API⌗

Lets you annotate videos in a variety of formats. It helps you identify key entities - that is, nouns - within your video and when they occur. You can use it to make video content searchable and discoverable.

Review⌗

In this short module, I'll look back on what we covered in this course.

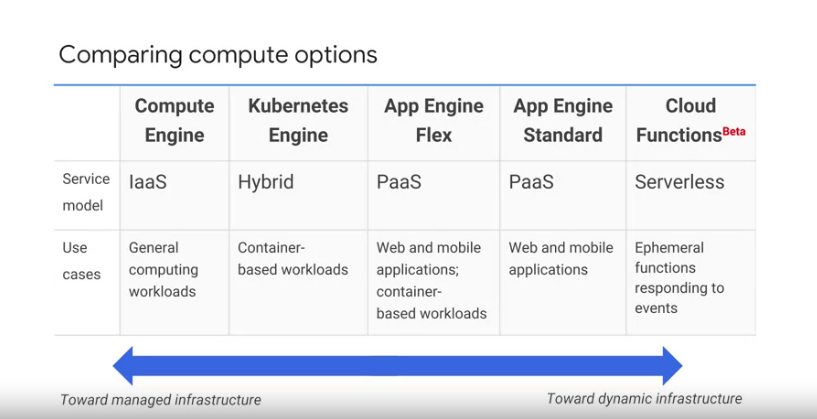

Remember the continuum that this course discussed at the very beginning? The continuum between managed infrastructure and dynamic infrastructure. GCP's Compute Services are arranged along this continuum, and you can choose where you want to be on it.

Choose Compute Engine if you want to deploy your application in virtual machines that run on Google's infrastructure. Choose Kubernetes Engine if you want instead to deploy your application in containers that run on Google's infrastructure, in a Kubernetes cluster you define and control.

Choose App Engine instead if you just want to focus on your code, leaving most infrastructure and provisioning to Google. App Engine flexible environment lets you use any runtime you want, and gives you full control of the environment in which your application runs. App Engine standard environment lets you choose from a set of standard runtimes and offers finer-grained scaling and scale to zero.

To completely relieve yourself from the chore of managing infrastructure, build or extend your application using Cloud Functions. You supply chunks of code for business logic, and your code gets spun up on demand in response to events.

GCP offers a variety of ways to load balance inbound traffic. Use Global HTTP(S) load balancing to put your Web application behind a single anycast IP to the entire Internet. It load balances traffic among all your backend instances in regions around the world. And it's integrated with GCP's Content Delivery Network.

If your traffic isn't HTTP or HTTPS, you can use the global TCP or SSL Proxy for traffic on many ports. For other ports or for UDP traffic, use the regional load balancer. Finally, to load balance the internal tiers of a multi-tier application, use the internal load balancer.

GCP also offers a variety of ways for you to interconnect your on-premises or other cloud networks with your Google VPC. It's simple to set up a VPN and you can use Cloud Router to make it dynamic. You can also peer with Google at its many worldwide points of presence, either directly or through a carrier partner. Or if you need a service level agreement and can adopt one of the required network topologies, use Dedicated Interconnect.

Consider using Cloud Datastore if you need to store structured objects, or if you require support for transactions and SQL-like queries. Consider using Cloud Bigtable if you need to store a large amount of single-keyed data, especially structured objects. Consider using Cloud Storage if you need to store immutable binary objects.

Consider using Cloud SQL or Cloud Spanner if you need full SQL support for an online transaction processing system. Cloud SQL provides terabytes of capacity while Cloud Spanner provides petabytes and horizontal scalability. Consider BigQuery if you need interactive querying in an online analytical processing system with petabytes of scale.

I'd like to zoom into one of those services we just discussed, Cloud Storage, and remind you of its four storage classes. Multi-regional and regional are the classes for warm and hot data. Use Multi-regional especially for content that's being served to a global Web audience. And use Regional for working storage for compute operations.

Nearline and Coldline are the classes for, as you'd guess, cooler data. Use Nearline for backups and for infrequently accessed content. And use Coldline for archiving and disaster recovery.